3 Natural Language Processing use cases for analyzing survey responses

Say you ran a survey and collected responses from 1,000 individuals.

You’ve included two open-ended questions in your survey and all 1,000 of your respondents answered them, using 15 words each.

Using simple arithmetic, you’ll find that you’ve collected 2,000 open-ended responses (2 * 1,000) that totaled 30,000 words (2,000 * 15).

With such a daunting amount of text to read, how can you reasonably expect to review and identify the key insights from your responses?

The answer involves Natural Language Processing, often referred to as NLP, which is essentially the practice of using computers to help make sense of large amounts of text data.

Throughout this page, we’ll provide an introduction to Natural Language Processing and discuss how to use it to help review your survey results. By the end, you’ll have an idea of how to use Natural Language Processing in your future surveys.

Introduction to Natural Language Processing

Natural Language Processing is a field that applies computer programming and machine learning techniques to understand and make use of large volumes of text data.

Natural Language Processing offers hundreds of ways to review your open-ended survey responses. Unfortunately, you don’t have the time to review each of these applications and decide on the best one.

We’ll fast-track your review process by walking you through 3 of the most popular Natural Language Processing use cases.

The word cloud

The word cloud allows you to identify the relative frequency of different keywords using an easily digestible visual.

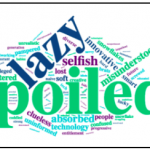

For example, in a previous study, we’ve asked Americans to describe millennials in a single word. Their responses led to the following word cloud:

The bigger words in the chart appear more often in responses relative to the other words. In this case, these words tend to be negative—e.g. “lazy” and “spoiled.”
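The counting behind a word cloud can be sketched in a few lines of Python. This is a minimal illustration, not the method used for the chart above: the responses, the stop-word list, and the linear font-size scaling are all assumptions made for the example.

```python
import re
from collections import Counter

# Hypothetical responses, invented for illustration only.
responses = [
    "lazy", "spoiled", "lazy", "entitled", "they are lazy",
    "creative", "spoiled", "lazy and entitled",
]

# A tiny, assumed stop-word list; real lists are much longer.
STOP_WORDS = {"they", "are", "and", "the", "a"}

def word_frequencies(texts):
    """Count how often each non-stop word appears across all responses."""
    counts = Counter()
    for text in texts:
        words = re.findall(r"[a-z']+", text.lower())
        counts.update(w for w in words if w not in STOP_WORDS)
    return counts

def relative_sizes(counts, min_size=10, max_size=60):
    """Scale each count linearly against the most frequent word."""
    top = max(counts.values())
    return {w: min_size + (max_size - min_size) * c / top
            for w, c in counts.items()}

freqs = word_frequencies(responses)
sizes = relative_sizes(freqs)
for word, count in freqs.most_common():
    print(f"{word:10s} count={count} size={sizes[word]:.0f}")
```

Filtering stop words is one way to keep filler such as “and” or “the” out of the visual, although deciding which words count as filler remains a judgment call.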

Now that you know how it works, you might be asking yourself, “How do word clouds help my survey analysis?”

Here are some of its key benefits:

- It’s intuitive and easy to comprehend

- It helps identify overall respondent sentiment and the specific factors that drive it

- It provides direction for further analysis

But here are some of its drawbacks to consider:

- It fails to measure each word’s value in and of itself

- It allows irrelevant words to appear

- When words appear similar in size, it becomes difficult to differentiate them

TFIDF (term frequency–inverse document frequency)

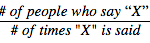

TFIDF focuses on how unique a word or a group of words are from a set of responses. It’s calculated as follows:

The closer the number is to 1, the more important the word becomes. What’s the reasoning behind this formula? If many people say something but don’t necessarily say it frequently, it’s easily neglected or missed, despite its value to your analysis. TFIDF solves this challenge by highlighting the most important unique words or groups of words.
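To make the idea concrete, here is a minimal sketch of one common textbook variant, where a term's score is its share of a document's words multiplied by log(N / df), with N the number of documents and df the number of documents containing the term. This is an assumption: it may differ from the formula in the figure above, and scores in this variant are not capped at 1. The age brackets and responses are invented for the example.

```python
import math
import re
from collections import Counter

# Hypothetical responses, pooled by age bracket (invented data).
documents = {
    "18-24": "pay tuition and buy textbooks then save the rest",
    "25-44": "pay off bills and save the rest",
    "45+": "invest it and save some for bills",
}

def tokenize(text):
    return re.findall(r"[a-z']+", text.lower())

def tf_idf(docs):
    """Return {doc_name: {term: score}} using tf * log(N / df)."""
    tokens = {name: tokenize(text) for name, text in docs.items()}
    n_docs = len(tokens)
    # Document frequency: in how many documents does each term appear?
    df = Counter()
    for words in tokens.values():
        df.update(set(words))
    scores = {}
    for name, words in tokens.items():
        counts = Counter(words)
        scores[name] = {
            term: (count / len(words)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        }
    return scores

scores = tf_idf(documents)
ranked = sorted(scores["18-24"].items(), key=lambda kv: kv[1], reverse=True)
for term, score in ranked:
    print(f"{term:10s} {score:.3f}")
```

In this toy data, words that appear in every bracket (such as “save”) score zero, while words that appear in only one bracket (such as “tuition”) rise to the top of that bracket's ranking.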

For example, let’s say we gathered responses from the question: “If you had $1,000 and you could save it, invest it, or use it to pay off bills, what would you do with it?”

We end up finding that many young adults would spend the money on school-related expenses, as words like “tuition” and “buying textbooks” have a high TFIDF rating.

Use TFIDF when you want to…

- Drill down on the unique words that are used by a large sample of respondents

- Identify a theme to focus on

- Easily compare the relevance of a word or a group of words to others

Just keep the following pitfalls in mind…

- The voices of a few respondents can get buried and neglected

- If many respondents say something, and say it often, that word or group of words can receive a score that isn’t representative of its significance

- When something is said by only a few respondents, infrequently, that word or group of words can receive a score that overstates its importance

Topic modeling

Topic modeling is an advanced natural language processing technique that involves using algorithms to identify the main themes or ideas (topics) in a large amount of text data. Topic modeling algorithms examine text to look for clusters of similar words and then group them based on the statistics of how often the words appear and what the balance of topics is.

As a result, topic modeling helps you understand the key themes from your survey responses as well as the relative importance of each theme.
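One widely used topic modeling algorithm is latent Dirichlet allocation (LDA). The sketch below is a toy collapsed Gibbs sampler for LDA written with only the Python standard library. It is an assumption that LDA is a reasonable stand-in here, since the article does not name a specific algorithm, and the responses, stop-word list, and parameter values (`n_topics`, `alpha`, `beta`, `n_iter`) are all hypothetical choices for illustration.

```python
import random
from collections import Counter

def fit_lda(docs, n_topics, n_iter=200, alpha=0.1, beta=0.01, seed=0):
    """Toy collapsed Gibbs sampler for LDA; docs is a list of token lists."""
    rng = random.Random(seed)
    vocab_size = len({w for doc in docs for w in doc})
    doc_topic = [[0] * n_topics for _ in docs]         # tokens per topic, per doc
    topic_word = [Counter() for _ in range(n_topics)]  # word counts per topic
    topic_total = [0] * n_topics
    assignments = []
    # Start by giving every token a random topic.
    for d, doc in enumerate(docs):
        zs = [rng.randrange(n_topics) for _ in doc]
        for w, z in zip(doc, zs):
            doc_topic[d][z] += 1
            topic_word[z][w] += 1
            topic_total[z] += 1
        assignments.append(zs)
    for _ in range(n_iter):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                z = assignments[d][i]
                # Remove the token, then resample its topic given all the others.
                doc_topic[d][z] -= 1
                topic_word[z][w] -= 1
                topic_total[z] -= 1
                weights = [
                    (doc_topic[d][k] + alpha)
                    * (topic_word[k][w] + beta)
                    / (topic_total[k] + beta * vocab_size)
                    for k in range(n_topics)
                ]
                z = rng.choices(range(n_topics), weights=weights)[0]
                assignments[d][i] = z
                doc_topic[d][z] += 1
                topic_word[z][w] += 1
                topic_total[z] += 1
    return doc_topic, topic_word

# Hypothetical open-ended responses, invented for illustration.
responses = [
    "swimming is exhausting and the water is cold",
    "too exhausting and i hate cold water",
    "cold water and chlorine make it exhausting",
    "swimming is fun and relaxing",
    "fun exercise and very relaxing",
    "great exercise and so much fun",
]
STOP = {"is", "and", "the", "i", "it", "too", "so", "very", "much", "make"}
docs = [[w for w in r.split() if w not in STOP] for r in responses]

doc_topic, topic_word = fit_lda(docs, n_topics=2)
total_tokens = sum(map(sum, doc_topic))
for k, words in enumerate(topic_word):
    top = [w for w, c in words.most_common(3) if c > 0]
    share = sum(row[k] for row in doc_topic) / total_tokens
    print(f"topic {k}: {top} (share of tokens: {share:.0%})")
```

The number of topics is an input rather than something the algorithm discovers, so it is worth rerunning with a few different values of `n_topics` and comparing how interpretable the resulting word clusters are. On a dataset this small the clusters can be unstable, and a production analysis would more likely rely on an established library than on a hand-written sampler.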

Let’s use a hypothetical situation to showcase topic modeling in action:

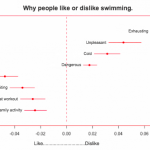

Let’s say we asked respondents whether or not they like swimming. We followed up with an open-ended question where the respondent can explain their answer. Our topic model produces the following chart, based on the clusters of similar words that appear in the open-ended responses.

Eight main topics emerge, based on the frequency of word clusters that appeared in our open-ended responses. The lines on either side of each topic show its 95% confidence interval, which reflects the uncertainty in that topic’s weight.

As you can see, the topic clusters for respondents who said they don’t like swimming are negative, while the clusters for respondents who said they like swimming are positive. In our example above, “exhausting” was the most relevant topic among respondents who disliked swimming. Meanwhile, “fun” was the most relevant topic among respondents who liked it.

How does topic modeling help your survey analysis?

- Identifies key topics that drive the respondent’s sentiment in a certain direction

- Helps you understand each topic’s level of influence

- Produces an intuitive and easy to understand visual

Here are some of its shortcomings:

- Doesn’t account for the significance of each topic in and of itself

- The survey creator specifies the number of topics they’d like to have in advance. This easily leads to human error; choosing an excessive number of topics creates less valuable ones while choosing an insufficient number leaves out potentially important topics

- Becomes overwhelming and less meaningful if too many key topics are chosen

Deciding on the right application of Natural Language Processing isn’t simple. But choosing between these 3 use cases makes the process much easier. So go forward and embrace your free responses with confidence. You’ll uncover any and all of the key insights they provide.
