It will come as no surprise that the more questions you ask, the fewer of the respondents who start a survey or questionnaire will complete it. If you need responses from a certain number of respondents, and only a limited audience or sample will start your survey, then the fewer questions you ask, the better. Many surveys cannot be condensed into just a few questions, but when response rates matter, keeping surveys succinct can help.

We analyzed response and drop-off rates in aggregate across 100,000 random surveys conducted by SurveyMonkey users to understand how drop-off rates changed as survey length increased. We looked at both the number of questions and the number of pages. We wanted to understand the drop-off rate from start to finish for surveys with 1 to 50 questions, to see how drop-off rates correlated with each incremental question per survey. The one qualifier was that a respondent had to submit a response on at least 1 page to be counted. To conduct our study, we looked at 2,000 random surveys with 1 question, 2,000 with 2 questions, 2,000 with 3 questions, and so on, all the way up to 2,000 surveys with 50 questions.

So what did we find?

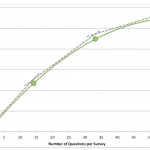

As expected, the more questions per survey, the higher the respondent drop-off rate from start to finish. However, as the graph above shows, the relationship between survey length and drop-off rate is not linear. The data suggests that once a respondent begins answering a survey, the drop-off rate rises most sharply with each additional question up to 15 questions. If a respondent is willing to answer 15 questions, the increase in drop-off rate for each incremental question, up to 35 questions, is smaller than for the first 15 questions added to a survey. Respondents willing to answer more than 35 questions appear largely indifferent to survey length and are willing to complete a long survey (within reason, of course: we limited our analysis to surveys with 50 questions or fewer, and didn’t tackle the really, really long surveys we’ve seen this time around).

The chart above is based on random, aggregated survey and completion rate data from surveys deployed between January 2009 and September 2010. For each number of questions (1-50), 2,000 random surveys were included (100,000 surveys in total). A survey was considered “started” if a respondent submitted at least one page of data that included 1 response to a question, hence the 100% completion rate for any survey with 1 question. A survey was considered “completed” if the respondent reached the end of the survey and clicked submit. Skip logic may have resulted in respondents not answering the maximum number of questions in any given survey.
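To make the “started” and “completed” definitions concrete, here is a minimal Python sketch of how completion and drop-off rates per survey length could be computed. The record layout (`num_questions`, `started`, `completed`) and the function names are assumptions made for illustration, and pooling respondents across surveys of the same length is only one plausible way to aggregate; it is not necessarily how the chart was produced.

```python
from collections import defaultdict


def completion_rates(surveys):
    """Return {num_questions: completed / started}, pooled across surveys.

    `surveys` is an iterable of dicts with hypothetical keys:
      num_questions - number of questions in the survey
      started       - respondents who submitted at least one answered page
      completed     - respondents who reached the end and clicked submit
    """
    started = defaultdict(int)
    completed = defaultdict(int)
    for s in surveys:
        started[s["num_questions"]] += s["started"]
        completed[s["num_questions"]] += s["completed"]
    # Skip lengths with no starts to avoid dividing by zero.
    return {n: completed[n] / started[n] for n in started if started[n] > 0}


def drop_off_rates(surveys):
    """Drop-off rate is the share of starters who did not complete."""
    return {n: 1.0 - rate for n, rate in completion_rates(surveys).items()}


# Made-up illustrative records, not data from the study.
sample = [
    {"num_questions": 1, "started": 10, "completed": 10},
    {"num_questions": 5, "started": 20, "completed": 15},
    {"num_questions": 5, "started": 20, "completed": 17},
]
rates = completion_rates(sample)
drops = drop_off_rates(sample)
```

Under these definitions, a 1-question survey always comes out at a 100% completion rate, because submitting the only answer both starts and completes it.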

So what does this mean, from a practical perspective?

- If you are trying to optimize for completed survey responses, try to keep your survey short.

- The incremental value of each question should be worth the possible drop in response rates.

- Skip logic can help shorten a survey by letting respondents navigate only to the survey questions relevant to them.
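One way to weigh these trade-offs is to estimate how many respondents need to start a survey in order to reach a target number of completes. The sketch below is illustrative only: `required_starts` is a hypothetical helper, and the completion rates passed to it are made-up placeholders rather than figures from our data. Substitute rates observed in your own surveys.

```python
import math


def required_starts(target_completes, completion_rate):
    """Estimate the starts needed to reach `target_completes`.

    `completion_rate` is the assumed fraction of starters who finish,
    greater than 0 and at most 1.
    """
    if not 0 < completion_rate <= 1:
        raise ValueError("completion_rate must be in (0, 1]")
    # Round up: a fraction of a respondent cannot complete a survey.
    return math.ceil(target_completes / completion_rate)


# Hypothetical comparison of a shorter and a longer version of a survey.
short_version = required_starts(150, 0.75)
long_version = required_starts(150, 0.5)
```

The lower the completion rate you expect from a longer survey, the larger the audience you need to reach the same number of completes.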

Of course, each survey, audience, and distribution method is different, and results will vary widely between surveys, but keep these metrics in mind as you design your next survey.

Have you experienced higher or lower drop-off rates in your surveys as you varied the number of questions? We’d love to hear your thoughts on this data, as well as any other data that you’d find helpful.

Written by Brent Chudoba, SurveyMonkey’s Vice President of Business Strategy & Business Intelligence. As the largest source of online survey distribution and response collection in the world, SurveyMonkey’s data insights come from reviewing aggregate data across millions of new responses per day across hundreds of thousands of live surveys. Our goal is to share aggregate analysis and insights to help our customers be more effective and successful in conducting surveys.