The ultimate guide to building effective customer feedback programs

Use customer feedback to build stronger products and create better customer experiences.

A staggering 89% of CX pros believe customer experience is the leading contributor to churn. Investing in customer feedback programs is an investment in your company's longevity and success.

Gathering customer feedback allows an organization to confidently hone its products, services, and customer interactions. Rather than leaving you to make inferences blindly, feedback provides a roadmap to creating better customer experiences (CX).

While there are distinctions between business-to-business (B2B) and business-to-consumer (B2C) feedback programs, the main pillars of a great strategy remain the same. In our ultimate guide to building effective customer feedback programs, we’ll dive into everything you need to know to create winning customer experiences, no matter your business model.

Chapter 1

Customer feedback definition, importance, and role in business

Customer feedback is invaluable for any business in today's competitive market. It provides direct insight into what customers think about your products or services, highlighting what you are doing well and uncovering areas for improvement. By understanding customer needs and preferences, you can make informed decisions that enhance their overall experience, drive innovation, and build customer loyalty.

What is customer feedback?

Customer feedback is the information, opinions, and insights customers provide about their experiences with a company’s products or services. It can be positive, neutral, or negative, and it includes reviews, reactions, complaints, suggestions, and observations about products, services, and interactions. Collecting and analyzing this feedback helps businesses understand customer needs, identify areas for improvement, and enhance customer satisfaction.

Why is customer feedback important?

Customer feedback is vital as it offers you clear insight into how your customers feel and what they want to see in the future. When you understand what your customers want, you can make effective decisions to improve their experience.

Effectively collecting, responding to, and implementing customer feedback allows your business to:

- Better meet customer needs

- Identify areas to improve

- Measure customer satisfaction

- Reduce churn and increase retention

- Make better business decisions

- Drive innovation and new product development

- Differentiate from the competition

- Build customer trust and loyalty

Related reading: Tips and resources for building a better customer experience

Chapter 2

Getting stakeholder buy-in for your customer feedback program

Organizations need full support from leaders and teams across their business to develop an effective customer feedback program. Although you can cite statistics, such as the fact that 91% of customers will share a positive experience with their friends and family, it also helps to gather real-world data from your own business.

Here’s how you can get stakeholders at every level on board with creating a customer feedback program.

Identify program stakeholders

The first step in building a customer feedback program is identifying who will be involved. For example, your stakeholders may include:

| Team | How they use feedback |
| --- | --- |
| Frontline employees | • Improve customer interactions and troubleshooting techniques • Relay on-the-ground insights to leadership |
| Customer support | • Improve response times and support satisfaction levels • Identify common pain points and develop processes to reduce customer friction |
| Customer success | • Develop strategies to prevent churn and foster long-term customer loyalty • Offer ongoing assistance and training to ensure customers reach their intended goals |
| Product development | • Generate new product or feature ideas • Prioritize product launches • Implement improvements based on identified bugs and pain points |
| Marketing | • Tailor campaigns to customers’ needs & preferences • Refine product positioning in the market |
| Sales | • Adjust communication strategies to meet customer needs better • Adapt sales strategies to align with evolving customer preferences and market trends |
| Leadership | • Prioritize initiatives, allocate resources, and shape long-term business strategies • Build a customer-centric culture • Measure performance and identify areas of improvement |

Obtaining buy-in from executive leadership

If executives and senior management don’t consider your program mission-critical, you won’t get the resourcing and funds you need to ensure its success. To show why your program matters, you must demonstrate how collecting and acting upon customer feedback delivers results that leadership will love.

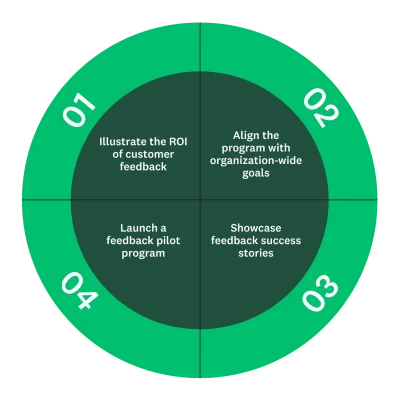

Here are four methods to show your leadership why investing in your feedback program is vital across every stage of the customer journey.

1: Illustrate the ROI of customer feedback

The easiest way to get your executive team on board with a customer feedback program is to demonstrate its impact on profit. Showing the potential ROI of customer feedback programs will clarify why your business should invest in CX.

Related reading: 10 strategies to maximize the ROI of your CX programs

2: Align the program with organization-wide goals

Another useful way to increase the appeal of a customer feedback program is to demonstrate how it aligns with your organizational goals. Your feedback program should not be at odds with your goals, nor should it seem like a new and random pursuit. Develop a clear connection between the feedback program and your organization's broader goals.

3: Launch a feedback pilot program

A pilot program lets you test the efficacy of your feedback initiatives before a wider rollout. Running the pilot over three or six months gives you plenty of data to show why it's time to expand to a full-scope implementation.

4: Showcase feedback success stories

Customer feedback programs aren’t new. The biggest and best companies around the globe have been using them for decades, so there are plenty of third-party case studies you can turn to when making your case.

Read SurveyMonkey success stories to learn how top brands leverage customer insights to deliver a better customer experience.

Chapter 3

Defining goals, objectives, and KPIs

Building an effective, centralized, and systematic customer feedback program from scratch isn’t simple. You must define goals, objectives, and key performance indicators (KPIs) to bring your customer feedback program to life.

Define customer feedback program objectives

To justify the need for a customer feedback system, it is essential to establish a target for your program that aligns with your company's goals. Start by setting a clear and achievable objective before proceeding with any other steps.

Example of CX program goal, objective, and KPI

- Goal: Enhance customer satisfaction

- Objective: Increase Net Promoter Score (NPS®) by 15% by Q4

- KPI: NPS

We aim to increase NPS by 15% over the next six months. To achieve this, we'll launch transactional and relational NPS surveys to track changes in this metric. Continuous monitoring and responding to feedback will help us identify improvement opportunities and implement them. After six months, we’ll evaluate the effectiveness of our customer satisfaction initiatives.
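To make the KPI concrete, here is a minimal Python sketch of the standard NPS calculation: the percentage of promoters (ratings of 9 or 10) minus the percentage of detractors (ratings of 0 to 6) on a 0-10 "likelihood to recommend" question. The function name and the sample ratings are illustrative assumptions, not part of any SurveyMonkey API.

```python
def net_promoter_score(ratings):
    """Return NPS (from -100 to 100) for a list of 0-10 ratings."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")
    promoters = sum(1 for r in ratings if r >= 9)   # ratings of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)  # ratings of 0 through 6
    # Passives (7 or 8) count toward the total but toward neither group.
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical survey responses, for illustration only.
sample_ratings = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(net_promoter_score(sample_ratings))  # prints 20.0
```

Because NPS is a score rather than a percentage, it may be worth agreeing up front whether a 15% target means a relative increase over the current score or a 15-point gain.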

Related reading: How to use NPS surveys to create the best customer experience

Chapter 4

Collecting customer feedback

In this chapter, we will explore the various methods of collecting customer feedback, the importance of analyzing this feedback, and how to effectively implement changes based on the customer insights you gather.

Types of customer feedback

Here are the main types of customer feedback your business should be familiar with:

- Solicited and unsolicited feedback: Solicited feedback is actively sought out from customers through surveys, feedback forms, and direct requests for opinions, and can be tailored to address specific areas of interest. Unsolicited feedback is provided by customers unprompted through social media comments, online reviews, and emails, offering honest and raw insights into customer sentiments.

- Satisfaction and loyalty: Feedback on overall customer loyalty and satisfaction rates will reveal how your customers relate to your business. Check out these questions to ask customers.

- Customer service support: By asking for customer feedback after support interactions, you can gauge how effective your customer service team is and where they could improve. This customer service research can be extremely helpful.

- Website and in-app feedback: Your business can implement live feedback modules on your site or app. Whenever a customer interacts with a certain touchpoint, you can automatically trigger these feedback forms to load. This form of feedback will demonstrate how smooth your website or app experience is and whether there are any points of friction along the customer journey.

- Sales feedback: After completing a purchase, your customers can provide feedback to your business, commenting on how easy the process was. This post-purchase feedback is useful for learning more about the success of your sales process.

Determine feedback channels to gather insights

There are numerous channels for collecting customer feedback. Engaging with customers across several channels offers your business an extensive and nuanced feedback base.

Here are the top feedback channels you can use.

| Channel | Description |
| --- | --- |
| Surveys | Surveys are one of the most effective feedback collection methods available to a business. There is an endless library of different survey templates that you can use to get precisely the feedback you need. |
| Customer interviews | Customer interviews allow you to conduct a deeper level of qualitative research. Chatting with a customer in a 1-1 format ensures that the conversation flows in a direction that’s useful for your business. |
| Focus groups | Focus groups offer the high level of qualitative detail that customer interviews have but expand them to larger conversations with more people. Focus groups with 8-20 customers allow your business to rapidly explore your customers' perceptions, attitudes, and preferences. |
| Market research | Market research is another powerful way of collecting customer feedback. While wider research won’t directly discuss your products and services, it can give you an insight into what the industry thinks. |
| Social media monitoring | By monitoring your social media mentions (both tagged and untagged), you can scour different platforms for real-time feedback on your company. You can combine these responses with NLP sentiment analysis tools to turn qualitative feedback into quantitative research. |

Related reading: How to get started conducting market research

Determine the right timing and frequency for collecting feedback

Another important aspect to consider when collecting feedback is how frequently you send feedback requests. Too infrequently, and you won’t have enough data to draw insightful conclusions. Too often, and you’ll overwhelm your audience, reducing their willingness to leave feedback.

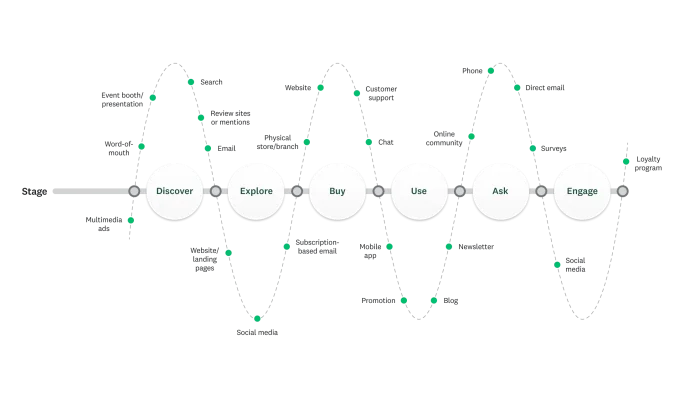

Collect feedback throughout the customer journey

Your business can collect customer feedback across the entire customer journey. Understanding the most important touchpoints in this journey and which are useful to monitor will help you create effective feedback request strategies.

By putting yourself in the customers' shoes, you can trace your customer journey and identify the most critical moments. Combining this with customer journey mapping will enable you to get feedback right when it's most critical.

Chapter 5

Analyzing and implementing feedback

Drawing insights from your customer feedback gives you actionable steps for improvement. By constantly implementing feedback and requesting more feedback on your new features, you create effective customer feedback loops.

Identify feedback trends and themes

Continually collecting customer experience feedback over time and monitoring changes in your CX metrics allows you to gauge whether your feedback program has a positive effect. You can also actively benchmark your data to determine how your customer experiences shift over time.

Using filters in your data helps you pinpoint specific trends. A filter is a survey analysis tool that lets you focus on particular subsets of your data to see how specific groups respond to your questions.
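As a rough illustration of what a filter does, the Python sketch below narrows a set of responses to one subgroup and summarizes that subgroup's answers. The field names (`plan`, `region`, `satisfaction`), the sample data, and the `filter_responses` helper are all hypothetical; a survey platform's built-in filters would play this role in practice.

```python
from statistics import mean

# Hypothetical exported responses; satisfaction is on a 1-5 scale.
responses = [
    {"plan": "free", "region": "EU", "satisfaction": 3},
    {"plan": "free", "region": "US", "satisfaction": 4},
    {"plan": "enterprise", "region": "EU", "satisfaction": 5},
    {"plan": "enterprise", "region": "US", "satisfaction": 4},
    {"plan": "enterprise", "region": "US", "satisfaction": 3},
]


def filter_responses(rows, **criteria):
    """Keep only the rows whose fields match every given criterion."""
    return [
        row for row in rows
        if all(row.get(field) == value for field, value in criteria.items())
    ]


# Focus on one subset of respondents and summarize how they answered.
enterprise = filter_responses(responses, plan="enterprise")
print(len(enterprise), mean(r["satisfaction"] for r in enterprise))

# Criteria can be combined to narrow the subset further.
enterprise_us = filter_responses(responses, plan="enterprise", region="US")
print(len(enterprise_us))
```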

Use visualizations

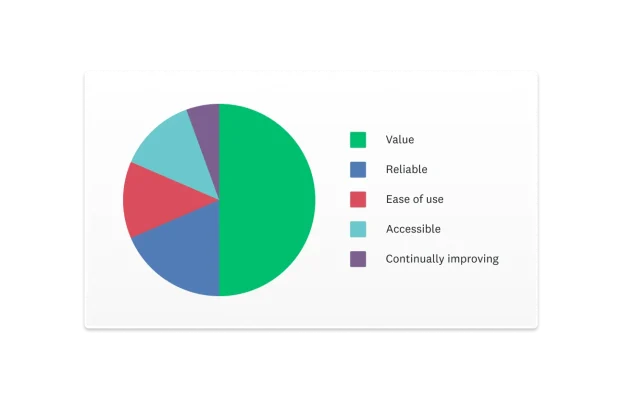

Charts can help you visualize your results and convert complex data into an easily digestible format. For example, you could use a multiple-choice survey question to ask respondents what they like about using your product.

A bar chart could help you make subtle comparisons across your answer choices. Even when the results are close, you can see that value beats out the other options as your top differentiator.

Now, if you want to know what customers like most about your product, you can use a pie chart. This type of chart works well for accentuating the differences in popularity among choices. This time around, it’s clear that value beats out the other options.

There’s nuance in choosing between kinds of charts. For example, a line or area graph can be created from a multi-select closed-ended question, while pie and donut charts can only be created from a closed-ended question that allows a single answer.

Related reading: When and how to use SurveyMonkey’s most popular chart types

Compare data from different customer touchpoints

When exploring related data across distinct customer touchpoints, you may encounter stark differences. Let’s say you were exploring satisfaction rates for customer service support via phone calls and web chat.

If these two touchpoints (which should offer the same level of support) receive opposing satisfaction rates, you can use this as a new line of inquiry. You could review customer feedback for both channels and discover why one service channel is rated better.
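The comparison described above could be sketched in Python as follows. The channel names, the 1-5 ratings, and the one-point threshold for flagging a gap are illustrative assumptions rather than recommended values.

```python
from collections import defaultdict
from statistics import mean


def satisfaction_by_channel(ratings):
    """Average the satisfaction ratings for each support channel."""
    grouped = defaultdict(list)
    for channel, score in ratings:
        grouped[channel].append(score)
    return {channel: mean(scores) for channel, scores in grouped.items()}


# Hypothetical post-support survey ratings on a 1-5 scale.
support_ratings = [
    ("phone", 5), ("phone", 4), ("phone", 5), ("phone", 4),
    ("chat", 2), ("chat", 3), ("chat", 4), ("chat", 3),
]

averages = satisfaction_by_channel(support_ratings)
gap = averages["phone"] - averages["chat"]

# Assumed threshold: a gap of a full point or more prompts a closer look.
if abs(gap) >= 1:
    print(f"Review feedback for both channels (gap of {gap:.1f} points)")
```

A gap like this only says where to look; the written comments for each channel are what explain why one is rated better.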

Drawing conclusions

Reporting on survey results and drawing clear conclusions comes back to understanding the story that your data tells. Examining the patterns you’ve identified in your data will often lead to potential conclusions. However, it’s important to understand the difference between correlation and causation before making too many assumptions.

Chapter 6

Taking action on customer feedback

No matter how thoroughly you analyze your survey results, they won’t add value to your organization if they aren’t shared with the teams who can act on them.

Which teams care about which types of survey data? Let’s find out.

Share customer feedback with program stakeholders

Your program stakeholders can turn your data into powerful insights for their teams. You don’t need an advanced system for sharing customer feedback to be successful; you just need to make sure the right people have access to your data.

Here are some key strategies you can use to communicate insights obtained from customer feedback with program stakeholders:

- Full visibility: Some colleagues just need to dive into the complete data. If you want them to be able to analyze the responses however they see fit, just share the entire survey with them.

- Filters: Other colleagues may not have the time to dig through the data. Use filters to show them only the information they care about.

- Visualizations: Are you planning to use your results in presentations or handouts? You can export any chart from your data as a PDF or as a PowerPoint to easily use in meetings.

- Stats-first: Data-savvy colleagues might want to analyze your results more deeply. Try exporting your responses and sharing them as an SPSS file or a CSV file.

- Dashboard: Practically anyone can benefit from seeing your data presented in an intuitive, easy-to-read Results Dashboard.

- CRM: If you’ve integrated SurveyMonkey with a CRM like Salesforce, it can automatically send relevant colleagues a notification when a customer responds.

- Integrate survey data: Integrate SurveyMonkey with Tableau, Marketo, and more so you can share the responses on a platform your colleagues already use.

Prioritize action items

While all customer feedback is important, your response won’t be the same for every customer. Some feedback requires immediate attention. For example, if a customer has stated that they cannot access a service they’ve paid for, your support team should fix the issue as quickly as possible. On the other hand, some feedback is worth replying to but doesn’t carry the same urgency.

By understanding the scope of actions you can take based on feedback, you can prioritize where you first need to respond. Determine how a response aligns with your customer service and business goals. If a quick response enhances the impact of your customer service, it should be a top priority.
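One way to picture this prioritization is a simple triage rule, sketched below in Python. The `FeedbackItem` fields and the ordering (service-blocking issues first, then items that merit a reply, then everything else) are hypothetical simplifications of the example above; a real program would weigh its own customer service and business goals.

```python
from dataclasses import dataclass


@dataclass
class FeedbackItem:
    customer: str
    summary: str
    blocks_paid_service: bool  # customer cannot use something they paid for
    needs_reply: bool          # worth replying to, but not urgent


def triage(items):
    """Order items: service-blocking first, then reply-worthy, then the rest."""
    # False sorts before True, so each flag is negated to put urgent items first.
    return sorted(
        items,
        key=lambda item: (not item.blocks_paid_service, not item.needs_reply),
    )


# Hypothetical inbox, for illustration only.
inbox = [
    FeedbackItem("Ana", "Would love a dark mode", False, True),
    FeedbackItem("Ben", "Cannot log in to paid account", True, True),
    FeedbackItem("Cy", "Great onboarding!", False, False),
]

for item in triage(inbox):
    print(item.customer, "-", item.summary)
```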

Chapter 7

Closing the feedback loop

If your business wants to continue receiving feedback, you have to respond to the feedback coming in and outline the actionable steps you’re taking to address it. Feedback loops only work when you respond to feedback, acknowledge your customers’ comments, and take action.

Closing the customer feedback loop:

- Builds trust: Responding to customers improves their relationship with your business.

- Improves credibility: Showing that your company is listening to customer feedback enhances your credibility and makes you more appealing to customers.

- Enhances customer experiences: Taking actionable steps to improve the customer experience based on the feedback will help boost customer satisfaction.

There’s a reason that 52% of customer experience professionals want to invest more in customer feedback programs. Closing the feedback loop is a win-win for everyone.

Closing the feedback loop with internal stakeholders

Closing the feedback loop with internal stakeholders, whether from marketing, product, sales, or leadership, is crucial for improving their respective business areas.

Involving internal stakeholders in the feedback process enables teams to understand how to effectively act on customer feedback. Communicate clear plans for how each team should address feedback and highlight the impact of their actions on fostering customer loyalty and enhancing the employee experience.

Closing the feedback loop with customers

On your survey’s end page (the page respondents see after submitting the survey), let every customer know how much you value their feedback. The page can detail how you manage their responses and provide examples of what you’ve done with customer feedback in the past.

How you respond to clients can change based on your goals, customer base, and business size. Additionally, positive feedback will require a different response than negative feedback.

Here’s an example of how you could respond to positive feedback:

- Hi {Name}, thanks for your feedback—we’re so glad our support team made a great impression! Love our service? Refer a friend and you’ll both get 3 free months when they sign up. Have a great day!

Here’s an example of how you could respond to negative feedback:

- Hi {Name}, thanks for reaching out about pricing—we hear you! We're launching a more affordable tier this July. In the meantime, enjoy a one-month trial at a discounted rate so you can experience the value firsthand. Looking forward to your thoughts!

Chapter 8

Driving continuous CX improvement

In our final chapter, discover how to enhance your existing customer feedback programs, measure your progress, refine your strategies, and create a more robust system.

Create a customer-centric culture

A customer-centric culture should be at the heart of your company’s DNA. Beyond gathering customer feedback, it's also crucial to prioritize employee feedback. Employees who feel supported are more likely to deliver exceptional customer service. By actively collecting and responding to employee feedback, you can build a more positive, productive, and engaged workplace.

Improve employee training and development

Once you understand the customer experience in more detail, you can take steps to deliver more value to clients. For example, you’ll be able to train customer-facing employees in the areas they need to improve on most, and you’ll be in a position to adjust your product roadmap to better meet your customers' needs and wants.

Providing ongoing training and development opportunities allows your employees to enhance their skills. As they become more proficient, they’ll actively deliver streamlined experiences to your customers. The more resources and training employees regularly have at their disposal, the better, especially for front-line staff who are in direct contact with your customers.

Related reading: Set your employees up for success with impactful training

Measure and monitor progress

Your job isn't over once you have a successful customer feedback program in place. It’s important to constantly measure performance metrics and feedback data to identify improvement areas.

Monitoring key customer experience metrics over time will also allow you to demonstrate the success of your customer feedback program. As your customer satisfaction rates improve over time, you can take that data to your leaders to prove the utility of your CX program.

Celebrate successes and learn from missteps

When employees feel recognized for their contributions, it enhances their commitment to delivering excellent customer experiences. Similarly, embracing failures as learning opportunities rather than setbacks is vital.

By analyzing what went wrong and adjusting strategies accordingly, you can continually improve your approach and ensure long-term success in building a robust customer feedback program. These moments of reflection and growth strengthen your team's resilience and drive continuous improvement in customer satisfaction and operational efficiency.

Build a world-class customer feedback program with SurveyMonkey

Our guide has explored how to build, refine, sustain, and improve a customer feedback program. These best practices, tips, and insights will allow you to craft a winning program that your customers, leadership, and employees love.

As you refine your employee and customer experience, you’ll receive tangible financial returns alongside happier customers. Your business can leverage SurveyMonkey to get an all-in-one platform for collecting, analyzing, and drawing actionable insights from feedback. Get started today.

Net Promoter Score and NPS are registered trademarks of Bain & Company, Inc., Fred Reichheld and Satmetrix Systems, Inc.
