Even in tough situations, people generally try to be positive and agreeable.

While that’s often a virtue in life, it isn’t when it comes to surveys. In fact, it can introduce bias into your results.

Researchers—like my team here at SurveyMonkey—have demonstrated that there are several ways that agree/disagree questions can cause your respondents to answer in a way that doesn’t always reflect their true opinions. Let’s take a look.

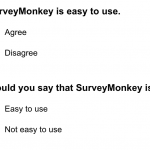

Here are two very similar questions that ask pretty much the same thing.

You might think that by asking either question, you’d get identical results, right? Respectfully, we disagree. There are two major types of problems that can arise from using agree/disagree questions.

Acquiescence bias: People have the tendency to say they like things, to say “yes” to things, or to agree with things—even if they don’t actually feel that way. Think about it this way: When your coworker asks if you like her new sweater, you’ll usually agree with her, right? Research has shown that tendency to hold true in answering survey questions, too.

Straightlining: Agree/disagree questions also make it easy to move through many questions in a survey and select the same answer every time without actually reading the options. Having multiple questions in a row with identical answer options (like agree/disagree) can bore your respondents. They may still be responding to each question, but they’re not carefully considering which response is best.
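If you want a rough sense of how much straightlining is happening in your own data, a check like the one below can help. It is only a minimal sketch: the function names, the 1-to-5 answer coding, and the sample responses are all made up for illustration, and a real survey export will need its own parsing.

```python
def is_straightlined(answers):
    """Return True when a respondent gave the same answer to every question in a grid."""
    # A single answer cannot form a pattern, so require at least two.
    return len(answers) > 1 and len(set(answers)) == 1


def straightlining_rate(responses):
    """Return the share of respondents whose answers are all identical."""
    if not responses:
        return 0.0
    flagged = sum(1 for answers in responses if is_straightlined(answers))
    return flagged / len(responses)


# Hypothetical data: each row is one respondent's answers to five
# agree/disagree questions, coded 1 (strongly disagree) to 5 (strongly agree).
responses = [
    [4, 4, 4, 4, 4],
    [2, 4, 3, 5, 1],
    [5, 5, 5, 5, 5],
    [3, 4, 4, 2, 3],
]

rate = straightlining_rate(responses)
print(f"{rate:.0%} of respondents gave the same answer to every question")
```

Keep in mind that identical answers are a flag, not proof. Some respondents genuinely agree with every statement, so treat the result as a prompt to look closer rather than a reason to discard responses.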

Your eyes don’t lie

Here’s new evidence that shows how little attention respondents are paying to your agree/disagree answer scale. Researchers at Stanford University in the U.S. and GESIS – Leibniz Institute for the Social Sciences in Germany used eye-tracking software to detect where respondents were looking as they answered survey questions online.

They did an experiment asking similar questions in two different formats—agree/disagree and item-specific—and they presented their results at the annual meeting of the Pacific Chapter of the American Association for Public Opinion Research.

Item-specific questions have response options that are specific to a particular question. Instead of having a one-size-fits-all scale that applies to many different agree/disagree questions, they use a scale whose wording is tailored to match the unique question wording.

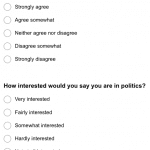

Here’s an example of a pair of questions the researchers used in their experiment. Notice how both questions ask for the same information—whether someone is interested in politics—using different question wordings and different answer formats, each with five choices.

The researchers used eye-tracking technology to track respondents’ attention to each type of question. The results show that survey-takers read and comprehend both types of questions in the same way, but they process the answer choices very differently.

The researchers measured:

- the number of fixations, or instances where someone is looking at a specific point;

- the duration of each fixation; and

- the number of re-fixations, or instances where someone switches their gaze from one answer option to a different one.
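To make those three measures concrete, here is a small sketch of how they could be computed from a list of gaze records. It is not the researchers’ code: the `Fixation` record, the `summarize` function, and every number in the example are hypothetical, and re-fixations are counted the way this article defines them (a switch from one answer option to a different one).

```python
from dataclasses import dataclass


@dataclass
class Fixation:
    option: str       # hypothetical label of the answer option being looked at
    duration_ms: int  # how long the gaze rested on that option


def summarize(fixations):
    """Compute fixation count, mean fixation duration, and re-fixation count."""
    count = len(fixations)
    mean_duration = sum(f.duration_ms for f in fixations) / count if count else 0.0
    # Count each move between consecutive fixations that lands on a different option.
    refixations = sum(
        1 for prev, cur in zip(fixations, fixations[1:]) if prev.option != cur.option
    )
    return {
        "fixation_count": count,
        "mean_duration_ms": mean_duration,
        "refixations": refixations,
    }


# Invented gaze data for one respondent on each question format.
agree_disagree = [Fixation("Agree", 180), Fixation("Agree", 150)]
item_specific = [
    Fixation("Very interested", 220),
    Fixation("Somewhat interested", 260),
    Fixation("Very interested", 240),
    Fixation("Somewhat interested", 300),
]

print(summarize(agree_disagree))
print(summarize(item_specific))
```

In this invented example, the item-specific respondent has more fixations, a longer average fixation, and three switches between options, while the agree/disagree respondent never looks away from a single option.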

They found that respondents to the item-specific version of the question had higher fixation counts, longer fixation durations, and more re-fixations than respondents to the agree/disagree version of the same question.

In plain English, that means that people answering questions with item-specific response options took more time considering each possible response, looking back and forth between options before deciding which best matched their own opinions. This is exactly what respondents should be doing as they take a survey.

What does this mean for you?

If your survey bores your respondents by asking them a series of agree/disagree questions, this research suggests that they won’t be particularly focused or conscientious when they respond. And what’s the point of asking a question if you know your respondents aren’t really going to try to answer it well?

Item-specific questions require a little more effort to answer than agree/disagree questions do. But don’t worry—the benefits outweigh the negatives. More effort means more concentration and more care while responding, which in turn leads to more well-considered responses and more accurate data.

SurveyMonkey works hard to ensure everything about the survey-making and survey-taking experience is easy; you can trust us when we say that in this case, it’s better to make things just a tiny bit more difficult.

This information comes from a study by Jan Karem Höhne (Stanford University, USA) and Timo Lenzner (GESIS – Leibniz Institute for the Social Sciences, Germany).